Table of Contents

- Online Brand Abuse Trends Are Outpacing AI’s Detection Capabilities

- What AI-Powered Brand Protection Platforms Actually Solve

- 4 Reasons AI-Powered Brand Monitoring Still Requires Human Expertise

- How Human-In-The-Loop Models Make Brand Protection AI Enforcement-Ready

- A Disciplined Data Infrastructure Is the Missing Layer

- This Is a Wake-Up Call for Brand Protection Platforms

- AI-Driven Brand Monitoring with Human Intervention: FAQs

Counterfeiters are not waiting for brand protection AI to catch up. Blurred logos, AI-generated product images, rotating seller accounts, emoji-substituted brand names — the evasion tactics are evolving faster than the training data used to detect them.

In 2025 alone, the World Intellectual Property Organization (WIPO) managed 6,282 domain name disputes. Counterfeit goods trade hit $467B in 2024. That trajectory is not slowing down.

AI-powered brand protection platforms were built to monitor the internet at scale — and they do it well. What they cannot do is interpret context, apply regional legal judgment, or recognize a fraud pattern they have never been trained on. Every time a model encounters an evasion tactic it has not seen, it either misses the threat or flags an innocent seller. That gap has a cost — and bridging it requires more than a better algorithm.

Online Brand Abuse Trends Are Outpacing AI’s Detection Capabilities

Online brand abuse has not just increased—it has fundamentally changed in how it spreads, scales, and evades detection.

A few years ago, most counterfeit activity was concentrated on a handful of global marketplaces such as Amazon, Alibaba, and eBay. Today, the threat landscape is far more fragmented. Infringing listings now appear across rapidly growing commerce ecosystems like Temu, Shein, TikTok Shop, and regional marketplaces such as Meesho—each operating with different moderation standards, enforcement speeds, and seller verification policies.

At the same time, counterfeit distribution has shifted toward speed and decentralization. According to OECD data, around 65% of counterfeit goods seizures now involve small parcels and mail, reflecting a move toward fragmented, low-volume shipments that are harder to detect and intercept.

Digital infringement is also increasingly tied to content-driven discovery. Counterfeit products can spread through viral videos, influencer promotions, and social commerce posts long before enforcement systems identify the source listing. A fake product promoted through viral content can reach millions of consumers across multiple platforms within hours, significantly amplifying the impact of brand abuse.

This shift is already influencing consumer behavior. A 2025 study by MarqVision found that 31.8% of shoppers purchased a counterfeit product they first discovered on social media, while 71.6% believed the item they bought was authentic—highlighting how convincingly counterfeit distribution now blends into legitimate digital commerce.

As a result, modern digital brand protection must address multiple forms of infringement simultaneously, including:

- Omnichannel abuse across marketplaces, independent websites, and social commerce platforms: The same infringing product may appear simultaneously on major marketplaces, independent eCommerce sites, and social commerce channels, allowing sellers to reach wider audiences while making enforcement more difficult.

- Brand impersonation through fake accounts and misleading storefronts: Fraudsters create fake profiles, seller accounts, or storefronts that closely mimic legitimate brands. These accounts often use similar names, logos, and product images to mislead customers into believing they are purchasing from the official brand.

- Cybersquatting and domain-based brand misuse: Cybercriminals register domain names that closely resemble legitimate brand names—often with minor spelling changes—to host counterfeit stores, phishing pages, or misleading promotions that exploit consumer trust.

- Deepfake and AI-generated misuse of brand assets and identities: Advances in generative AI enable bad actors to create synthetic images, videos, or audio that imitate brand representatives, influencers, or official marketing materials, making fraudulent promotions appear authentic.

- Social media-driven distribution of counterfeit or unauthorized products: Counterfeit sellers increasingly promote their products through viral posts, influencer collaborations, and short-form video content, enabling them to reach large audiences.

The scale of these threats is evident across multiple indicators:

- Global trade in counterfeit goods is estimated at $467 billion annually.

- In 2025, the World Intellectual Property Organization managed 6,282 domain name disputes, up from 4,204 in 2020.

- The U.S. Customs and Border Protection seized 32.3 million counterfeit items valued at $5.42 billion in 2024.

- EUIPO’s 2025 report found that 7,250 monitored websites and 398 mobile apps linked to intellectual property rights (IPR) infringement were actively generating advertising revenue, demonstrating how large-scale and monetized digital infringement ecosystems have become.

What AI-Powered Brand Protection Platforms Actually Solve

To address the complexity of online brand abuse, the market has increasingly adopted AI-powered Brand Protection Platforms (BPP). These platforms are built to solve one fundamental challenge: monitoring the internet at scale for potential brand abuse signals across marketplaces, websites, domains, and social platforms.

What These Platforms Typically Do:

- Crawl marketplaces, domains, and social platforms

- Detect suspicious listings using image and text models

- Identify brand keyword misuse and variations

- Track seller behavior patterns

- Generate risk scores and alerts

- Send automated takedown or DMCA notices

- Monitor minimum advertised price (MAP)

A platform can scan millions of listings far faster than a manual team and surface likely abuse patterns that would otherwise go unnoticed. However, many enterprises still overestimate what this automation actually solves.

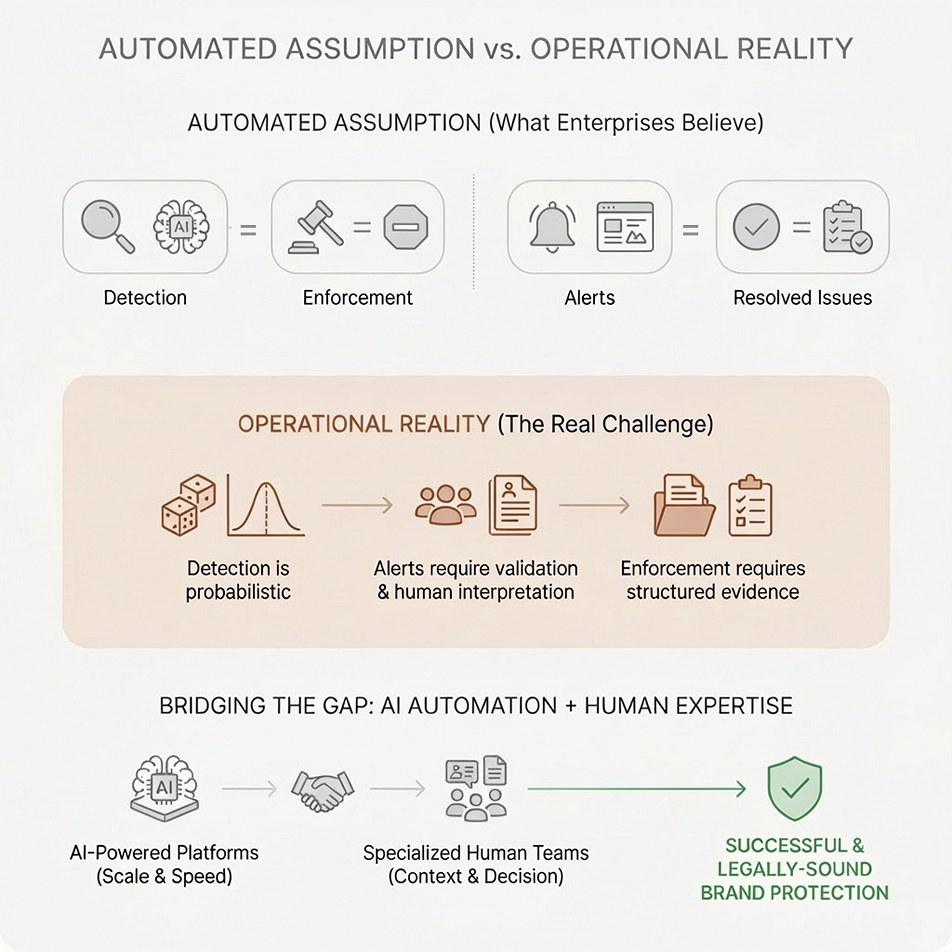

This creates a gap between what platforms detect and what brands can actually act upon. The real challenge lies in validating whether a signal represents a genuine infringement, adding the necessary commercial and legal context, and preparing enforcement-ready evidence for takedown actions.

4 Reasons AI-Powered Brand Monitoring Still Requires Human Expertise

Because pure-play AI is neither defensible nor reasonable.

The vast majority of trademark and brand protection practitioners (76%) prefer a hybrid “AI + Human” model over AI alone, according to The State of Trademarks 2025 by Corsearch. Top-tier platforms like Corsearch and Red Points recognize that their engines can scan millions of signals efficiently, but the final decision to trigger a takedown still needs a layer of human legal reasoning. Without it, platforms fall into the “false positive trap,” where an AI mistakenly targets an authorized reseller or a fair-use parody.

There are several other reasons why brand protection AI needs expert supervision and adequate data support.

1. AI Can Misinterpret Patterns for Intent

AI models used in brand protection software detect suspicious listings by identifying patterns learned from historical training data. Unlike human investigators, however, AI systems do not understand context or intent; they classify signals based on statistical similarity to previously identified violations. Because this is pattern matching rather than reasoning about intent, AI can misclassify legitimate activity that resembles known infringement patterns.

For example:

- A legitimate reseller offering discounted products may resemble a typical counterfeit listing and be incorrectly flagged.

- A fan-run social media page using brand imagery may be misclassified as brand impersonation.

- A legitimate marketplace listing with reused product images could be flagged as a duplicate or counterfeit listing.

When enforcement actions are triggered solely by automated detection, such errors can damage relationships with authorized distributors and undermine trust in the brand’s protection processes.

Human analysts reviewing AI outputs provide the contextual validation that automated systems lack. By verifying flagged cases before enforcement actions, such as marketplace complaints or website takedown requests, Brand Protection Platforms can ensure that detection signals translate into accurate, defensible decisions.

2. Scammers Evolve Faster than AI Models

Brand abuse operates in an adversarial environment—fraudsters actively study how detection systems work and continuously modify their tactics to avoid being flagged. Common evasion strategies include:

- Blurred or partially hidden logos

- AI-generated product imagery

- Misspelled brand names or coded language

- Rotating seller accounts across marketplaces

- Copycat domains that mimic legitimate websites

These shifts matter because brand monitoring AI performs best when the abuse resembles patterns it has already seen. Once fraudsters change the presentation, language, imagery, or distribution behavior, the model may no longer correctly classify the signal.

For example, instead of writing a brand name directly, a seller may use a near-match spelling, emoji substitutions, or platform-specific slang to avoid text-based detection. Likewise, a counterfeit seller may use synthetic product images that do not match known patterns of counterfeit photos, reducing the effectiveness of image-based matching.

Human analysts play a critical role in identifying new evasion patterns early. By manually reviewing flagged listings, websites, and seller behaviors, they can detect new evasion strategies and label them correctly. These verified examples are then fed back into training datasets, so that AI detection models can learn from the latest fraud patterns. This human-led learning loop keeps online brand protection solutions effective and aligned with evolving fraud tactics.

3. AI Cannot Build a Reliable Legal Enforcement Case

Detecting a potential violation does not automatically result in a listing being removed. Most marketplaces and domain authorities require verifiable, structured evidence before taking enforcement action. This is because takedown decisions must comply with platform policies, intellectual property regulations, and sellers’ due process requirements.

For example, platforms such as Amazon, Alibaba, and eBay require brands to submit detailed evidence of infringement when filing complaints. Enforcement requests must clearly demonstrate how a listing violates trademark, copyright, or brand policies. Evidence packages typically include:

- Screenshots of the infringing listings

- Side-by-side comparisons with genuine products

- Seller identity or account information

- Timestamped documentation of violations

Additionally, regulatory frameworks are becoming stricter. The EU’s Digital Services Act, fully applicable since February 2024, requires online platforms to provide notice-and-action mechanisms and to act on reports of illegal content, with penalties of up to 6% of global annual turnover for non-compliance.

This is where human intervention becomes critical. AI can flag suspicious listings at scale, but it cannot independently determine whether a case is complete, defensible, and ready for enforcement under platform-specific and legal requirements. Human reviewers are needed to verify the violation, interpret context, assess the quality of the evidence, and ensure the documentation is accurate, complete, and compliant. In other words, AI can accelerate detection, but human expertise is what turns a flagged signal into an enforcement-ready case.
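One way to make “enforcement-ready” concrete is a completeness check on the evidence package before anything is submitted. The sketch below is illustrative only: the `EvidencePackage` class, its field names, and the example URL are hypothetical, and actual requirements differ by marketplace.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List, Optional


@dataclass
class EvidencePackage:
    """Hypothetical container for the evidence items listed above."""
    listing_url: str
    screenshots: List[str] = field(default_factory=list)        # file paths
    comparison_images: List[str] = field(default_factory=list)  # side-by-side
    seller_id: Optional[str] = None
    captured_at: Optional[datetime] = None   # when the evidence was captured
    reviewed_by: Optional[str] = None        # analyst who verified the case

    def missing_items(self) -> List[str]:
        """Return the names of required items that are still absent."""
        checks = {
            "screenshots": bool(self.screenshots),
            "comparison_images": bool(self.comparison_images),
            "seller_id": bool(self.seller_id),
            "captured_at": self.captured_at is not None,
            "reviewed_by": bool(self.reviewed_by),
        }
        return [name for name, ok in checks.items() if not ok]

    def ready_for_submission(self) -> bool:
        return not self.missing_items()


pkg = EvidencePackage(
    listing_url="https://marketplace.example/listing/123",
    screenshots=["listing.png"],
    seller_id="seller-42",
    captured_at=datetime.now(timezone.utc),
)
print(pkg.missing_items())  # ['comparison_images', 'reviewed_by']
```

Treating `reviewed_by` as a required field is a design choice: it means no package can be marked ready until an analyst has signed off.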

4. Regional Law Breaks Automated Enforcement

Brand protection enforcement varies across regions, platforms, and legal systems, making it difficult to standardize automated decision-making. A listing that appears suspicious in one market may represent lawful gray-market resale in another, while platform-specific evidence thresholds and enforcement policies can also vary widely.

For instance, consider a premium Swiss watch brand, “Aurelius,” that sells its watches for $5,000 through authorized boutiques in New York. An AI brand protection engine scans a marketplace and finds a listing for a genuine Aurelius watch priced at $3,500, shipping from a small electronics exporter in Hong Kong. The AI notes a high-risk region, a 30% discount from MSRP, and a non-authorized seller name, and flags it as brand abuse.

In reality, that Hong Kong exporter bought “overstock” from a struggling authorized dealer in Italy six months ago. Under exhaustion-of-rights principles (such as the First Sale Doctrine in the US or Exhaustion of Rights in the EU), once a brand sells a physical product to a distributor, it often loses the right to control where that specific item is resold, although the scope of that rule varies by jurisdiction. That makes the seller a “gray-market reseller” and the product genuine, so the listing may be entirely lawful.
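The Aurelius scenario can be written out as a simple signal check to show why the flag fires. This is a sketch under stated assumptions: the `HIGH_RISK_REGIONS` set, the `AUTHORIZED_SELLERS` list, the seller name, and the 25% discount cutoff are all hypothetical values chosen to mirror the example, not rules used by any real platform.

```python
from dataclasses import dataclass
from typing import List

HIGH_RISK_REGIONS = {"HK"}                       # assumption for illustration
AUTHORIZED_SELLERS = {"Aurelius Boutique NYC"}   # hypothetical allow-list
DISCOUNT_CUTOFF = 0.25                           # hypothetical threshold


@dataclass
class Listing:
    seller: str
    ships_from: str   # region code
    price: float
    msrp: float


def discount(listing: Listing) -> float:
    """Fraction below MSRP, e.g. 0.30 for a 30% discount."""
    return (listing.msrp - listing.price) / listing.msrp


def risk_signals(listing: Listing) -> List[str]:
    signals = []
    if listing.ships_from in HIGH_RISK_REGIONS:
        signals.append("high_risk_region")
    if discount(listing) >= DISCOUNT_CUTOFF:
        signals.append("deep_discount")
    if listing.seller not in AUTHORIZED_SELLERS:
        signals.append("unauthorized_seller")
    return signals


def decide(listing: Listing) -> str:
    """Signals alone never trigger a takedown; they route to an analyst."""
    return "human_review" if risk_signals(listing) else "no_action"


watch = Listing(seller="HK Electronics Export", ships_from="HK",
                price=3500, msrp=5000)
print(round(discount(watch), 2))  # 0.3
print(risk_signals(watch))
print(decide(watch))              # human_review
```

All three signals fire, yet none of them says anything about where the watch came from. Provenance is the one fact that decides this case, and it is absent from the listing data.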

Similarly, enforcement standards and acceptable evidence requirements may differ between marketplaces and jurisdictions. Training AI models to fully capture these evolving legal and policy variations is difficult because such rules change frequently and are often interpreted differently across regions.

Human experts provide the contextual judgment required to evaluate these nuances. By applying regional knowledge, policy awareness, and case-by-case analysis, they ensure enforcement actions align with both brand policies and local regulations.

How Human-In-The-Loop Models Make Brand Protection AI Enforcement-Ready

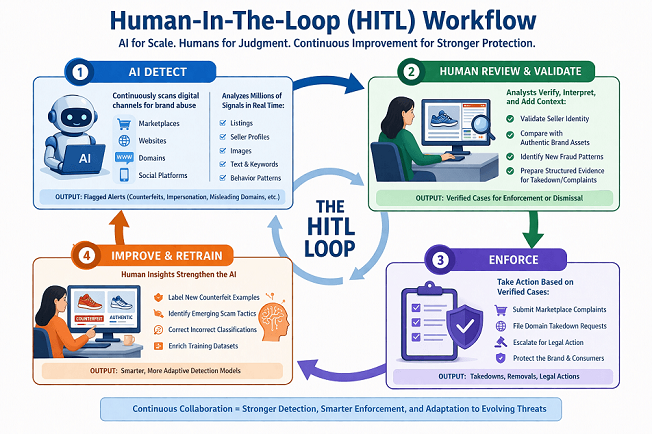

As digital brand abuse becomes more complex, many Brand Protection Platforms and agencies are adopting a human-in-the-loop (HITL) model—a hybrid approach that combines AI-driven detection with human expertise. This model recognizes that while AI excels at processing vast volumes of online data, effective brand protection still requires human judgment to interpret signals, validate violations, and guide enforcement actions.

In a typical HITL workflow, AI-powered brand monitoring systems continuously scan marketplaces, websites, domains, and social platforms to detect patterns that resemble known infringements. These systems analyze millions of listings, seller profiles, and digital signals in real time, flagging suspicious activity such as counterfeit product listings, impersonation accounts, or misleading domains.

Human analysts step in to review AI-generated alerts, verify whether the flagged activity truly represents brand abuse, and add the contextual information needed for enforcement actions. This includes tasks such as validating seller identities, comparing product images with authentic brand assets, identifying new fraud patterns, and preparing structured evidence for marketplace complaints or domain takedown requests.

Beyond validation, human experts also play a key role in improving the underlying AI systems. By labeling new examples of counterfeit listings, identifying emerging scam tactics, and correcting incorrect classifications, they continuously enrich training datasets and refine detection models. This feedback loop allows platforms to adapt their detection capabilities as fraud tactics evolve across marketplaces, social commerce channels, and regional digital ecosystems.
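The workflow described above can be sketched as a small review queue. This is only an illustration of the routing and feedback steps: the class names, the `review_floor` value, and the outcome labels are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Alert:
    listing_id: str
    model_score: float   # model's confidence that this is abuse, 0..1


@dataclass
class ReviewQueue:
    pending: list = field(default_factory=list)
    training_examples: list = field(default_factory=list)

    def triage(self, alert: Alert, review_floor: float = 0.5) -> str:
        """Low scores are dismissed; everything else waits for an analyst."""
        if alert.model_score < review_floor:
            return "dismissed"
        self.pending.append(alert)
        return "queued_for_review"

    def record_verdict(self, alert: Alert, is_abuse: bool, note: str) -> str:
        """Store the analyst's label so it can feed the next retraining run."""
        self.pending.remove(alert)
        self.training_examples.append(
            {"listing_id": alert.listing_id, "label": is_abuse, "note": note}
        )
        return "prepare_evidence" if is_abuse else "closed_no_action"


queue = ReviewQueue()
alert = Alert("L-1", 0.91)
print(queue.triage(alert))                                         # queued_for_review
print(queue.record_verdict(alert, False, "authorized reseller"))   # closed_no_action
```

Both confirmed and rejected alerts become labeled examples, so the model also learns from its false positives. A fuller design would likely also sample some dismissed alerts for review, since new evasion tactics tend to score low.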

However, for this feedback loop to scale, the underlying data infrastructure must be as disciplined as the human analysis.

A Disciplined Data Infrastructure Is the Missing Layer

This is where professional data services, including specialized AI training data support, preprocessing, and expert annotation, become essential. While human analysts provide the “judgment,” data services provide the “fuel” by converting raw, messy marketplace signals into high-quality, machine-readable inputs. Training sets built by providers that maintain in-house domain expert teams also tend to be more resilient to new evasion tactics.

For example, if raw marketplace feeds contain duplicate listings, inconsistent seller naming, mixed image quality, or missing metadata, even a strong analyst team will spend too much time cleaning signals before it can evaluate them. Preprocessing and annotation reduce that friction by turning messy marketplace inputs into cleaner, machine-readable, and reviewer-friendly data.
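As a rough sketch of that preprocessing step, the function below normalizes seller names, drops duplicates, and sets aside records with missing metadata. The field names (`seller`, `title`, `image_hash`) and the suffix list are assumptions for illustration. `image_hash` stands in for whatever image fingerprint is computed upstream.

```python
import re
from typing import Dict, List, Tuple


def normalize_seller(name: str) -> str:
    """Collapse case, punctuation, and common company suffixes."""
    cleaned = re.sub(r"[^a-z0-9 ]", " ", name.lower())
    cleaned = re.sub(r"\b(ltd|llc|inc|co)\b", " ", cleaned)
    return " ".join(cleaned.split())


def preprocess(listings: List[Dict]) -> Tuple[List[Dict], List[Dict]]:
    """Split raw listings into (clean, incomplete).

    Duplicates are detected by (normalized seller, image hash). Records
    missing a required field are set aside rather than silently dropped.
    """
    required = ("seller", "title", "image_hash")
    seen, clean, incomplete = set(), [], []
    for item in listings:
        if any(not item.get(key) for key in required):
            incomplete.append(item)
            continue
        key = (normalize_seller(item["seller"]), item["image_hash"])
        if key in seen:
            continue  # same seller, same image: treat as a duplicate
        seen.add(key)
        clean.append({**item, "seller_normalized": key[0]})
    return clean, incomplete


raw = [
    {"seller": "Best-Watches Co., Ltd.", "title": "Watch", "image_hash": "abc"},
    {"seller": "BEST WATCHES LTD", "title": "Watch!!", "image_hash": "abc"},
    {"seller": "Other Shop", "title": "Watch", "image_hash": ""},
]
clean, incomplete = preprocess(raw)
print(len(clean), len(incomplete))  # 1 1
```

Keeping incomplete records in a separate list, rather than discarding them, leaves reviewers a way to see what the feed is failing to capture.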

When implemented effectively and powered by expert-led data services, the human-in-the-loop model enables Brand Protection Platforms to achieve several critical outcomes:

- Higher detection accuracy through contextual validation of AI findings

- Faster enforcement actions supported by verified evidence

- Reduced false positives, protecting legitimate sellers and partners

- Stronger legal defensibility in marketplace disputes and takedown requests

- Superior model performance driven by expert-annotated “high-fidelity” training data

This Is a Wake-Up Call for Brand Protection Platforms

For Brand Protection Platforms to move from “probabilistic flagging” to “confident risk identification and resolution,” they must bridge the gap between what their AI sees and what it misses, with:

- High-Fidelity Data Infrastructure: Standardizing how messy marketplace data is preprocessed to ensure model reliability.

- Domain-Expert Annotation: Injecting legal, regional, and sector-specific expertise into training sets to prepare models against sophisticated evasion tactics.

- Strategic Oversight: Ensuring that every automated action is defensible, compliant, and supportive of the brand’s broader commercial ecosystem.

As AI evolves and fraud tactics scale, competitive advantage in brand protection will go to the platform with the most disciplined data, not the one with the most data. By integrating specialized data services and human expertise into the AI lifecycle, platforms can finally turn the “noise” of millions of signals into the “signal” of reclaimed revenue and restored consumer trust.

AI-Driven Brand Monitoring with Human Intervention: FAQs

What is a human-in-the-loop (HITL) model in brand protection?

A human-in-the-loop (HITL) model integrates human analyst review into AI-driven brand monitoring platforms. Rather than relying solely on automated detection, HITL ensures that flagged signals are verified for context, intent, and legal defensibility before enforcement actions are taken, reducing false positives and improving takedown success rates.

Can AI alone handle brand protection?

No. While AI-powered brand protection platforms excel at scanning millions of signals across marketplaces and digital channels, they often lack the contextual judgment needed to distinguish legitimate gray-market resellers from actual infringers, interpret regional legal nuances, or prepare enforcement-ready evidence packages. Human oversight remains essential for accuracy and compliance.

What do AI brand monitoring models commonly miss?

AI models frequently miss new evasion tactics such as misspelled brand names, emoji substitutions, AI-generated synthetic product images, and rotating seller accounts. They also struggle to identify gray-market resale, fair-use content, and cross-platform distribution patterns that span multiple channels simultaneously.

Why does training data quality matter for brand protection AI?

Brand protection AI models are only as reliable as the training data they are built on. Inconsistent marketplace data, mislabeled annotations, or outdated fraud patterns directly degrade detection accuracy. But the problem does not stop at initial training. Because brand abuse operates in an actively adversarial market, where counterfeiters continuously change their tactics, imagery, and distribution channels, models drift over time as the gap widens between what they were trained on and what fraud actually looks like today. Without regular retraining on current, expert-annotated data, even a well-built model becomes a liability: confidently flagging patterns that no longer reflect real threats, and missing the ones that do.

Rohit Bhateja, Director - Digital Engineering Services & Head of Marketing

Rohit Bhateja, Director of Digital Engineering Services and Head of Marketing at SunTec India, is an award-winning leader in digital transformation and marketing innovation. With over a decade of experience, he is a prominent voice in the digital domain, driving conversation around the convergence of technology, strategy, customer experience, and human-in-the-loop AI integration.