An AI Solution to Combat Counterfeits Flooding the Market

Counterfeiting, web spoofing, fake listings, and product copies have surged in recent years, cutting into the sales and growth of major brands in the global market. Identifying these online scammers across countless websites, marketplaces, and web portals is like finding a needle in a haystack.

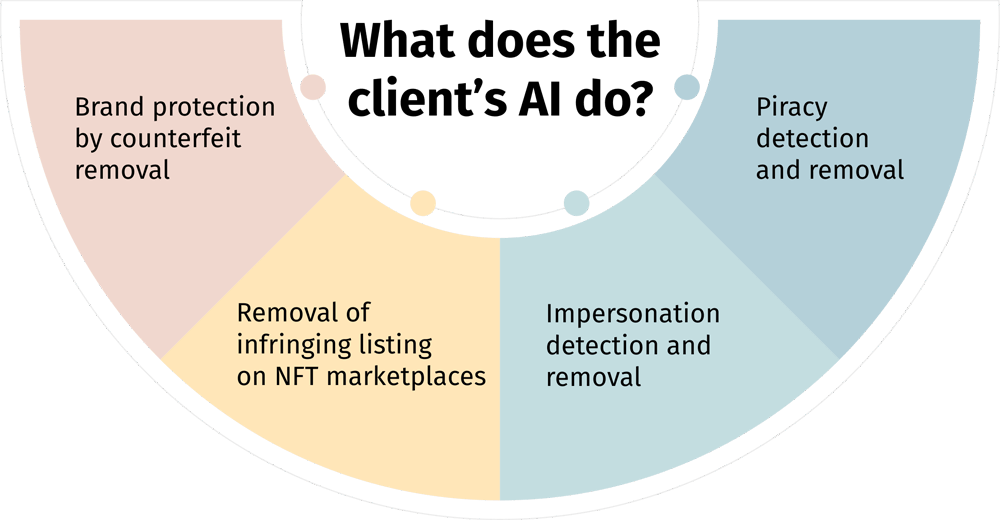

Our client is one of the leading revenue recovery companies and runs proprietary AI-based software that crawls the web on behalf of businesses to detect intellectual property (IP) infringements. The platform lets our client support its clientele by identifying copyright violations, product or brand impersonation, product or content piracy, counterfeits, and distribution abuse. With this proposition, our client currently helps over a thousand online brands monitor fraud and take the necessary legal action, backed by evidence, to recover their revenue.

SunTec India helped identify and close the gaps in the client’s AI brand protection platform through multiple types of data support solutions.