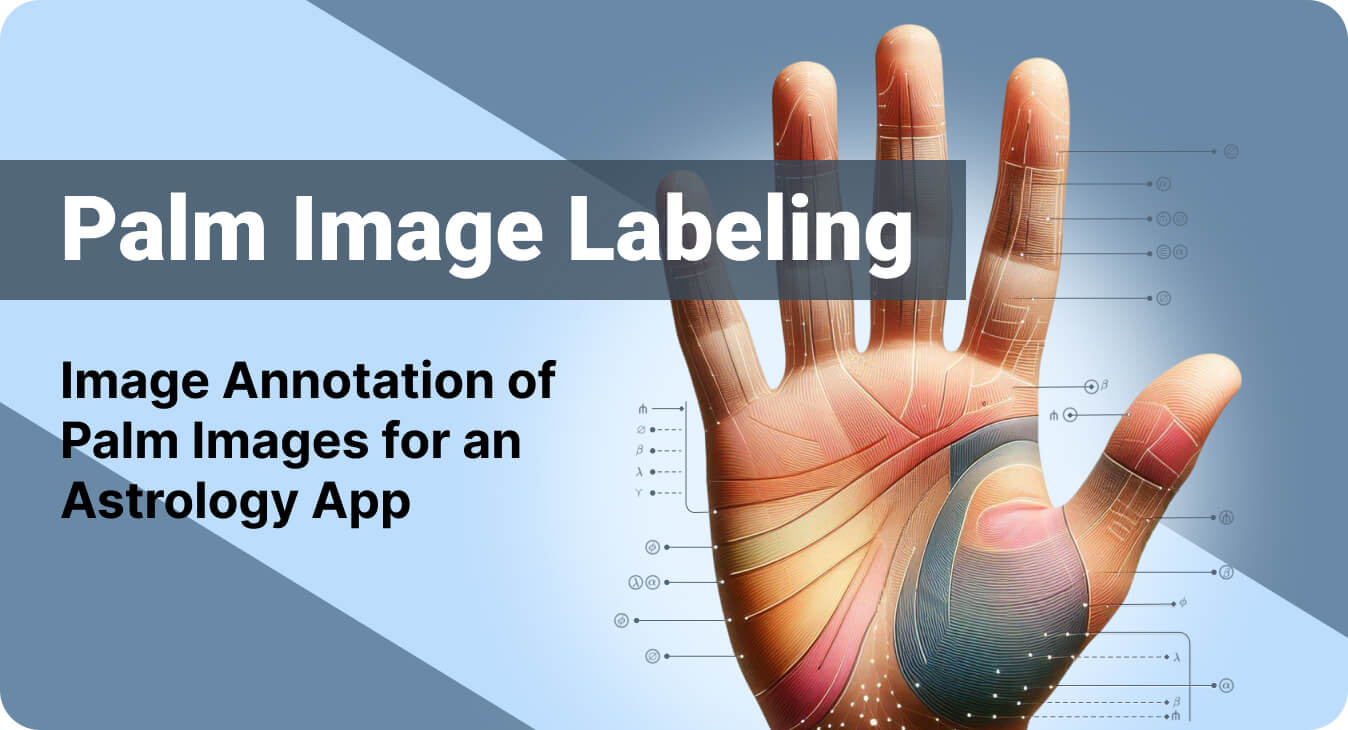

Helping an AI-powered astrology app improve palm reading accuracy by 25% through accurate image annotation

25%

Accuracy Boost in Application's Performance

10000+

Images Labeled For AI Model's Refinement

- Service Image Annotation, Polygon & Polyline Annotation, Image Segmentation

- Platform LabelBox

- Industry Astrology

45%

Improvement in Object Detection Accuracy

30%

Reduction in Operational Costs

3000+

Images Annotated with Precision

- Service Image Annotation, Bounding Box Annotation, Image Segmentation

- Platform CVAT

- Industry Government Sector

Successful Model

Development with High-Quality Training Datasets

Profitable Operations

in Multiple Regions

- Service Image Annotation

- Platform Client Platform

- Industry Technology

10K+

Images Annotated Monthly

95%+

Labeling Accuracy

- Service Image Annotation

- Platform QuPath

- Industry Agriculture (AgriTech)

15,000+

Images Annotated

95%+

Annotation Accuracy

- Service Image Annotation Services

- Platform Client’s Proprietary Annotation Platform

- Industry Wildlife Conservation / Energy

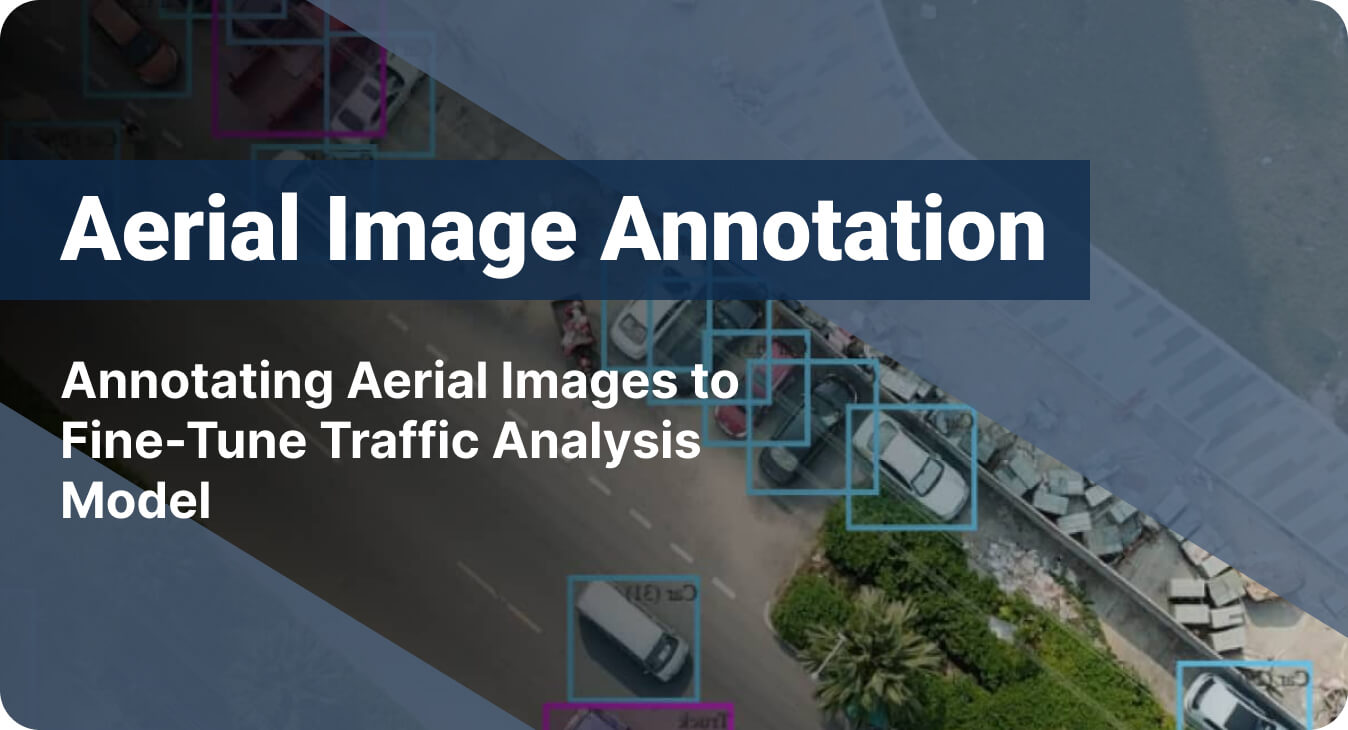

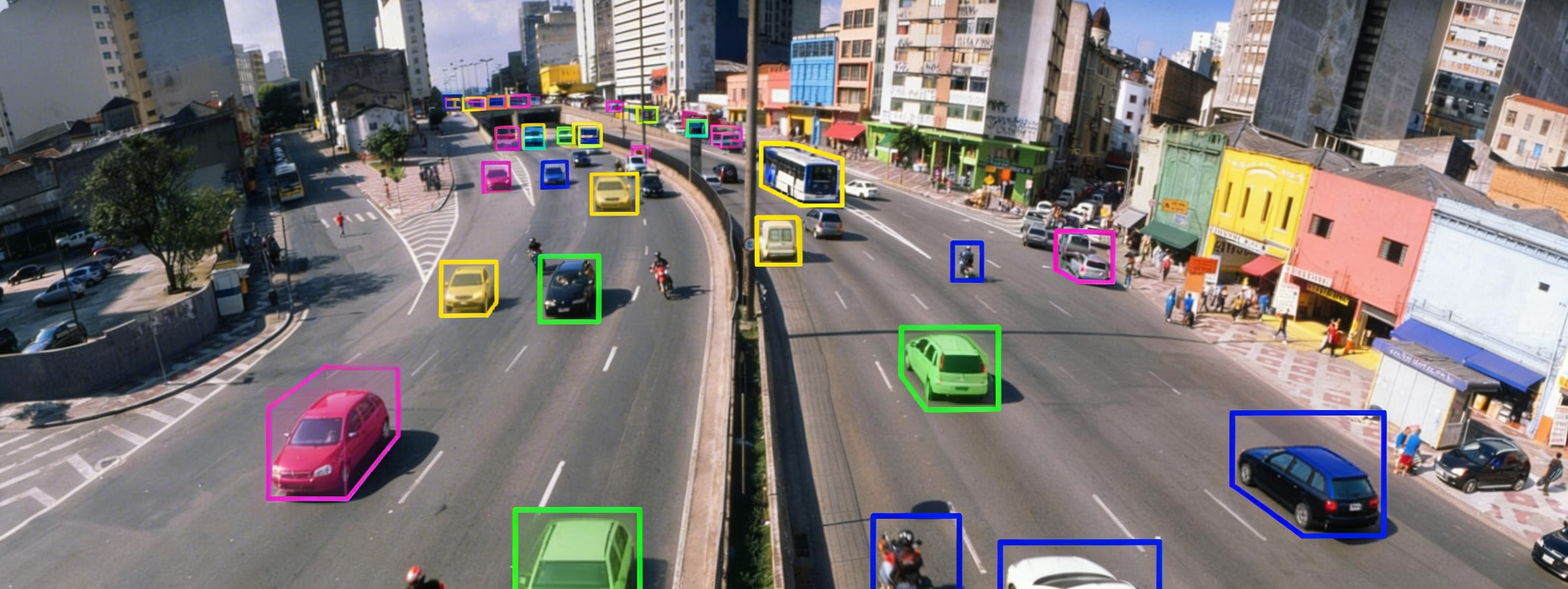

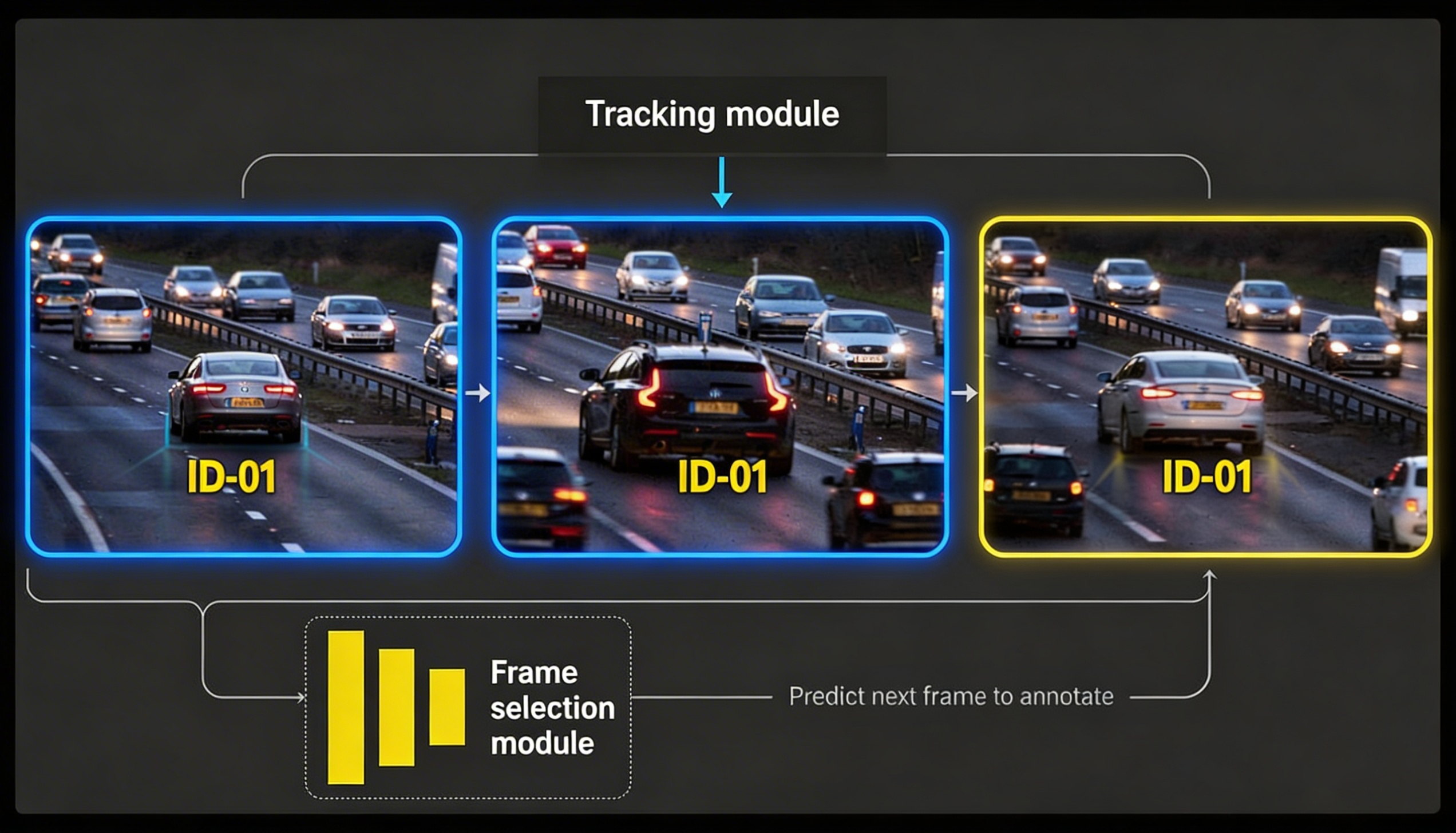

35%

Increase in Model Accuracy

20%

Improvement in Traffic Flow Monitoring

- Service Image Annotation, Bounding Box Annotation, Data Classification

- Platform CVAT

- Industry Urban Planning and Development

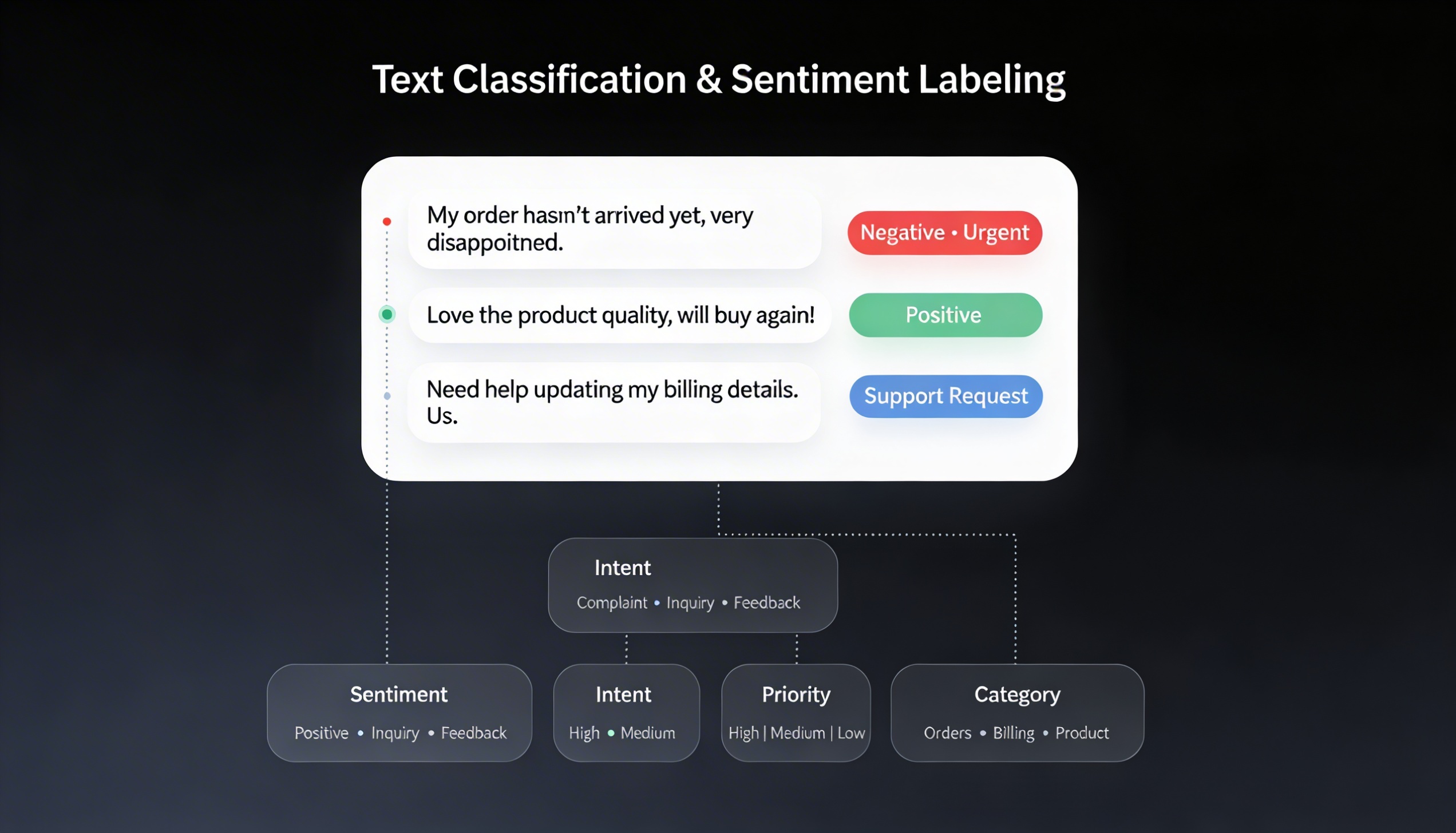

65%

Improved AI Model Accuracy

60%

Fewer Content Categorization Errors

4-Month

Faster Model Development

- Service Data Labeling, Text Labeling, Video Labeling, Web Research

- Platform Client's Predictive Content Intelligence Platform

- Industry Media and Entertainment