Our AI/ML experts improved response accuracy by training a GPT model according to specific client requirements.

Hire Deep Learning Experts

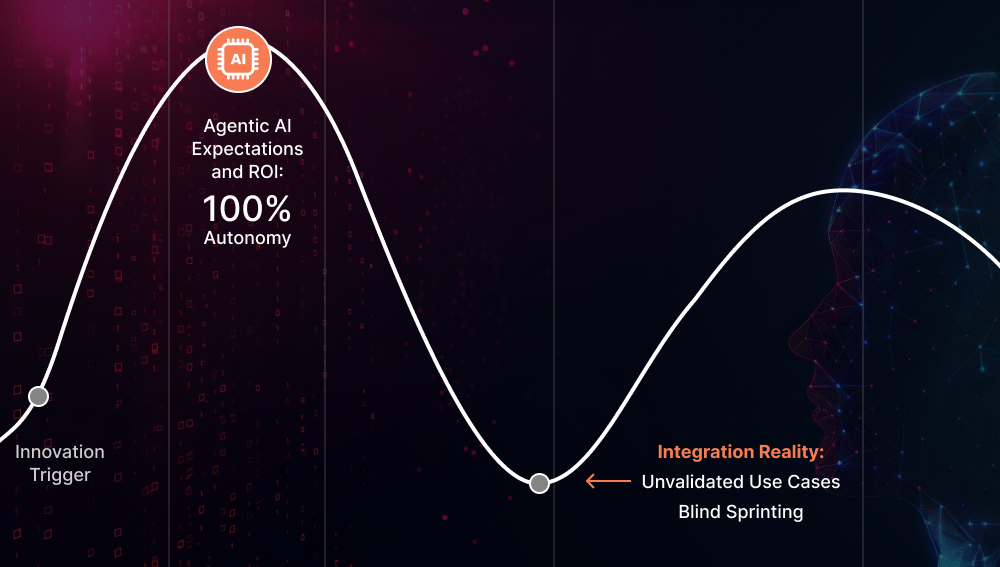

Transition from basic automation to autonomous decision-making.

While standard machine learning (ML) identifies trends in structured spreadsheets, Deep Learning (DL) interprets the complexity of the real world, processing unstructured data like video, raw sensor streams, and natural language with human-like nuance but at machine scale.

Why Do Enterprises Scale with Deep Learning?

Processing Unstructured Data at Scale

DL enables systems to extract actionable intelligence from petabytes of images, audio files, and multimodal sensor data.

Precision in High-Dimensional Environments

In sectors like BFSI or healthcare, DL models can identify non-linear patterns and anomalies that remain invisible to classical statistical models.

Building Autonomous AI Agents

DL powers cognitive engines capable of multi-step planning, tool-use, and self-correction to handle open-ended business workflows.

Domain-Specific Model Engineering

General-purpose AI often lacks the technical depth that specialized domains demand. Deep learning experts can fine-tune frontier LLMs on proprietary datasets to raise domain accuracy while keeping your data private.

Managed Talent. Engineered for Accountability.

Dedicated Full-Time Engineers

FTEs only. No freelancers or gig marketplaces.

Experienced Talent

Vetted Experts. Rapid Deployment.

Managed Operations

Senior Oversight. Time & Task Monitoring.

Workflow-Ready Integration

Jira, Slack, GitHub, Teams

Global Overlap

All Time Zones. 24/7 Support.

Security

ISO 27001 & CMMI3. NDA & IP Secure.

Send an Inquiry

Our Services

Comprehensive Deep Learning Development Services

Engineering Predictive Intelligence at Scale

Our deep learning experts provide the technical rigor required to move complex AI models from experimental notebooks into high-performance production environments.

Deep Learning Architecture and Strategy

Start your DL project with Technical Blueprints that align neural network selection with specific enterprise workloads and data constraints. Our deep learning engineers assess your data landscape, compute constraints, and business objectives to design architectures (CNNs, transformer models, GNNs, RNNs, etc.) tailored to your needs. We benchmark architectural choices against your latency, throughput, and accuracy requirements before training. The entire process is done within a private, localized environment to prevent third-party data leakage.

Deep Learning Model Development

Every model we build starts with a clear problem definition, not a default framework choice. Our deep learning developers build models from the ground up using frameworks like PyTorch and TensorFlow, or adapt frontier architectures (GPT-5.1, Gemini 3 Pro, Claude Opus 4.5, and Grok 4.1) to serve as your proprietary cognitive engines. We handle AI data processing, feature engineering, and model design in close collaboration with your stakeholders, delivering a production-ready model with clean code and comprehensive documentation.

Deep Learning Model Training

Training is where generic AI models are turned into tailored assets trained on your proprietary, real-world data, and also where most teams lose time and money. Hire deep learning engineers from us to manage End-to-End Training Pipelines using distributed frameworks like Horovod and PyTorch Distributed Data Parallel (DDP), leveraging GPU/TPU clusters to train at scale without runaway compute costs. We apply techniques such as Mixed-Precision Training, Learning Rate Scheduling, and PEFT (LoRA) for efficient domain adaptation.
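
As a rough illustration of one of these techniques, learning rate scheduling, the sketch below computes a linear-warmup, cosine-decay schedule in plain Python. The function name, the default values, and the choice of schedule are assumptions made for illustration, not a description of any specific client pipeline; in practice a framework's built-in scheduler would typically be used.

```python
import math


def warmup_cosine_lr(step, total_steps, base_lr=3e-4, warmup_steps=100, min_lr=0.0):
    """Learning rate for a given optimizer step (illustrative defaults)."""
    if step < warmup_steps:
        # Linear warmup: ramp from base_lr / warmup_steps up to base_lr.
        return base_lr * (step + 1) / warmup_steps
    # Fraction of the post-warmup phase completed, capped at 1.0.
    progress = (step - warmup_steps) / max(1, total_steps - warmup_steps)
    progress = min(1.0, progress)
    # Cosine decay from base_lr down to min_lr.
    return min_lr + 0.5 * (base_lr - min_lr) * (1.0 + math.cos(math.pi * progress))


if __name__ == "__main__":
    for s in (0, 50, 100, 550, 1000):
        print(s, warmup_cosine_lr(s, total_steps=1000))
```

The warmup phase avoids large, unstable updates at the start of training, and the cosine tail lets the model settle as training ends.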

Deep Learning Infrastructure & Ops Setup

A model that works only in a sandbox environment is not an asset, but a liability. Our deep learning engineers design and implement the MLOps infrastructure to move models from experimentation to production, using tools such as MLflow, Kubeflow, and DVC, while optimizing the hardware layer with NVIDIA CUDA. We also set up CI/CD pipelines for model deployment on cloud platforms (AWS SageMaker, GCP Vertex AI, or Azure ML). You get a robust, repeatable infrastructure that lets your team ship, monitor, and iterate on models with confidence.

Deep Learning Model Evaluation & Integrity Audits

High accuracy on a test set is not the same as a model you can trust in production. Our deep learning engineers conduct rigorous evaluations beyond standard metrics, running Adversarial Testing, Fairness Audits, and Distribution-Shift Analysis to surface failure modes. We also use SHAP (SHapley Additive exPlanations) to break down "black box" decisions into human-readable, explainable insights for transparency. To verify high-stakes outputs and keep the AI reliable in edge-case scenarios, we integrate Human-in-the-Loop (HITL) checkpoints.
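
SHAP attributions are built on Shapley values from cooperative game theory. A minimal sketch, assuming a hypothetical three-feature model (`toy_model`) and an all-zeros baseline, computes the exact values by averaging each feature's marginal contribution over every feature ordering. This brute-force approach grows factorially with the number of features and is shown only to make the idea concrete; the SHAP library relies on faster sampling-based and model-specific algorithms.

```python
from itertools import permutations


def shapley_values(predict, x, baseline):
    """Exact Shapley values of `predict` at `x`, relative to `baseline`."""
    n = len(x)
    totals = [0.0] * n
    orderings = list(permutations(range(n)))
    for order in orderings:
        current = list(baseline)          # start with every feature "switched off"
        previous = predict(current)
        for i in order:
            current[i] = x[i]             # switch feature i on
            updated = predict(current)
            totals[i] += updated - previous   # marginal contribution of feature i
            previous = updated
    return [t / len(orderings) for t in totals]


def toy_model(features):
    """Hypothetical model with an interaction between features 0 and 2."""
    a, b, c = features
    return 2 * a + 3 * b + a * c


if __name__ == "__main__":
    phi = shapley_values(toy_model, x=[1, 2, 3], baseline=[0, 0, 0])
    print(phi)
```

The attributions always sum to the difference between the prediction at `x` and the prediction at the baseline, which is what makes them usable as an audit trail for a single decision.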

Deep Learning Optimization & Performance Tuning

When inference is too slow, compute costs are too high, or model accuracy suffers, hire dedicated deep learning experts for optimization. Our engineers apply model compression techniques, such as Pruning, Quantization, and Knowledge Distillation, alongside hyperparameter optimization using tools like Optuna and Ray Tune to extract maximum performance from your existing models. We also optimize the execution graph for the target hardware, with sub-millisecond latency achievable where model size and hardware allow. The result is faster inference and significantly lower operational costs at scale.
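
To make one of these compression techniques concrete, here is a minimal sketch of symmetric 8-bit quantization in plain Python. The helper names and the single per-tensor scale are illustrative assumptions; production toolchains typically add calibration data, per-channel scales, and hardware-specific kernels.

```python
def quantize_int8(weights):
    """Map float weights to integers in [-127, 127] using one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float weights from the integer codes."""
    return [q * scale for q in quantized]


if __name__ == "__main__":
    weights = [0.5, -1.27, 0.0, 1.0]
    codes, scale = quantize_int8(weights)
    restored = dequantize(codes, scale)
    worst = max(abs(w - r) for w, r in zip(weights, restored))
    print(codes, scale, worst)
```

Each weight is stored in one byte instead of four, and the rounding error per weight is bounded by half the scale, which is the trade-off an optimization engagement has to measure against accuracy targets.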

Support & Maintenance for DL Models

Deep learning models degrade silently: data distributions shift, upstream pipelines change, and what worked at launch slowly stops working. Our DL support and maintenance engagements provide continuous oversight to catch these changes before they affect model performance. Hire remote deep learning developers to monitor drift and performance degradation, run scheduled retraining pipelines, and manage dependencies as frameworks and infrastructure evolve. We also maintain living documentation, conduct periodic health checks, and provide SLA-backed responses for critical issues.
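
One common way to quantify input drift is the Population Stability Index (PSI), which compares the binned distribution of a feature at training time with its distribution in production. The sketch below is a plain-Python illustration under assumed settings (10 equal-width bins, a small `eps` floor for empty bins); the 0.25 alert level mentioned in the comments is a widely used rule of thumb, not a universal threshold.

```python
import math


def population_stability_index(expected, actual, bins=10, eps=1e-6):
    """PSI between a reference sample and a production sample of one feature."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins
    if width == 0:
        width = 1.0  # degenerate reference sample: all reference values share one bin

    def bin_shares(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[max(0, min(bins - 1, idx))] += 1  # clamp out-of-range values
        # Floor empty bins at eps so the logarithm below stays defined.
        return [max(c / len(values), eps) for c in counts]

    e, a = bin_shares(expected), bin_shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


if __name__ == "__main__":
    reference = list(range(100))
    shifted = [v + 50 for v in reference]
    print(population_stability_index(reference, reference))  # identical samples
    print(population_stability_index(reference, shifted))    # well above 0.25
```

A scheduled job could compute this per feature and trigger a retraining review whenever the index crosses the agreed alert level.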

Scale Your Engineering Team with Our DL Experts

Skip the recruitment overhead and vet our specialized deep learning engineers for your specific stack: PyTorch, TensorFlow, or JAX.

Hire Now

How Do We Scope a Deep Learning Engagement?

Deep learning is not always the right tool, and we'll be the first to tell you that. Before any engagement begins, our deep learning engineers run a structured discovery to determine whether DL is warranted, viable, and scoped correctly.

Data Readiness

Is there sufficient labeled data to train a generalizable model? Or is few-shot learning or synthetic data augmentation needed first?

This tells us whether we're building from a stable foundation or need to solve a data problem before a modeling problem.

Task-Architecture Fit

Is this a structured prediction problem, a sequence modeling task, or a perception problem?

The answer determines whether we're looking at transformer-based architectures, CNNs, RNNs, or hybrid approaches.

Compute & Latency Constraints

Will inference run on our servers with flexible SLAs, or does this need to be edge-deployed with hard latency budgets?

This guides DL model selection and tells us how aggressively we need to optimize for efficiency versus accuracy from the start.

Regulatory & Interpretability Requirements

What are your explainability requirements? Will model decisions need to be auditable?

This tells us whether interpretability is a feature of the model or a compliance requirement, a distinction that influences architecture decisions.

Deep Learning Architectures We Work With

We don't default to the most popular architecture. Our deep learning experts select the one that best suits the problem. Here's what's in our engineering repertoire and where each fits.

Convolutional Neural Networks (CNNs)

CNNs are the backbone of computer vision engineering. Our deep learning specialists work with ResNet, EfficientNet, and DenseNet, each suited to different tradeoffs between model depth, accuracy, and compute efficiency.

Use case: Perception tasks where the DL model needs to recognize patterns, shapes, and structures within images

When we'd recommend it:

- Image classification

- Object detection

- Medical imaging analysis

- Real-time computer vision-based surveillance
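
The pattern recognition described above rests on one core operation: sliding a small kernel across the image and summing element-wise products. A minimal sketch in plain Python, assuming a single-channel image stored as a list of rows and a hand-picked edge kernel (in a trained CNN the kernel values are learned):

```python
def conv2d(image, kernel):
    """'Valid' 2D convolution as used in deep learning (no kernel flip, no padding)."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(
                image[i + di][j + dj] * kernel[di][dj]
                for di in range(kh)
                for dj in range(kw)
            )
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]


if __name__ == "__main__":
    # Dark left half, bright right half: a vertical edge in the middle.
    image = [[0, 0, 1, 1] for _ in range(3)]
    edge_kernel = [[-1, 1]]  # responds where brightness increases left to right
    print(conv2d(image, edge_kernel))
```

The output is non-zero only at the column where the brightness changes, which is the kind of local feature that deeper layers combine into shapes and objects.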

Graph Neural Networks (GNNs)

GNNs are DL models designed for data where the relationships between entities are as important as the entities themselves. Our deep learning developers use GCN, GraphSAGE, and GAT, chosen based on the graph's scale and structure.

Use case: Domains where data is inherently connected, such as transaction networks, molecular structures, and organizational hierarchies. Also used where standard models would miss the signal that lives in the relationships.

When we'd recommend it:

- Fraud detection across transaction networks

- Drug interaction and molecular property modeling

- Knowledge graph reasoning

- Recommendation systems built on relational data
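
The idea of learning from relationships can be sketched as one round of message passing: each node averages its own features with those of its neighbors, then applies a weight matrix and a ReLU. This is a simplified mean-aggregation layer in the spirit of GCN and GraphSAGE, not the exact normalization either of them uses; the function name, graph, and weights are hypothetical.

```python
def message_passing_layer(features, edges, weight):
    """One simplified graph layer: mean over self + neighbors, linear map, ReLU."""
    n = len(features)
    neighbors = {i: {i} for i in range(n)}  # self-loops keep each node's own signal
    for a, b in edges:                      # undirected edges
        neighbors[a].add(b)
        neighbors[b].add(a)

    in_dim, out_dim = len(weight), len(weight[0])
    output = []
    for i in range(n):
        group = neighbors[i]
        mean = [sum(features[j][k] for j in group) / len(group) for k in range(in_dim)]
        transformed = [sum(mean[k] * weight[k][o] for k in range(in_dim)) for o in range(out_dim)]
        output.append([max(0.0, v) for v in transformed])  # ReLU
    return output


if __name__ == "__main__":
    # Three accounts with one risk feature each; accounts 0 and 1 transact with each other.
    features = [[1.0], [3.0], [5.0]]
    edges = [(0, 1)]
    print(message_passing_layer(features, edges, weight=[[1.0]]))
```

Connected nodes end up with blended representations while the isolated node keeps its own, which is how signals such as shared counterparties can surface in fraud or recommendation graphs.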

Vision Transformers (ViTs)

Vision Transformers are a newer class of vision architectures that process images by breaking them into patches and analyzing relationships across the entire image, rather than scanning it region by region. Our deep learning engineers work with transformer-based vision models such as ViT, DeiT, and Swin Transformer.

Use case: Visual tasks that require understanding the full image in context, not just local features. In other words, where a broader view of the image improves prediction quality.

When we'd recommend it:

- Large-scale image recognition

- Medical image segmentation

- Multimodal pipelines combining vision and language

- Clinical report generation from scan data
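
The patch-splitting step described above can be sketched in a few lines. This is a simplified illustration in plain Python, assuming a single-channel image stored as a list of rows and a hypothetical helper named `patchify`; real ViT implementations operate on tensors and follow this step with a learned linear projection and position embeddings.

```python
def patchify(image, patch_size):
    """Split a 2D image into non-overlapping, flattened square patches."""
    height, width = len(image), len(image[0])
    if height % patch_size or width % patch_size:
        raise ValueError("image dimensions must be divisible by patch_size")
    patches = []
    for top in range(0, height, patch_size):
        for left in range(0, width, patch_size):
            patches.append(
                [
                    image[top + i][left + j]
                    for i in range(patch_size)
                    for j in range(patch_size)
                ]
            )
    return patches


if __name__ == "__main__":
    # 4x4 image with pixel values 0..15, split into four 2x2 patches.
    image = [[row * 4 + col for col in range(4)] for row in range(4)]
    for patch in patchify(image, patch_size=2):
        print(patch)
```

Each flattened patch then plays the role a word token plays in a language model, so attention can relate any region of the image to any other.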

Recurrent Neural Networks (RNNs)

These DL architectures are built to process data that unfolds over time, maintaining memory of what came before as they move through a sequence. They are well suited to lightweight, real-time deployments. Our deep learning experts work with Vanilla RNNs, Long Short-Term Memory (LSTM) networks, Gated Recurrent Units (GRUs), and Bidirectional Recurrent Neural Networks (BRNNs).

Use case: Situations where order and timing matter, such as financial transactions arriving in sequence, sensor readings over time, or any data where the pattern only becomes meaningful in the context of what preceded it.

When we'd recommend it:

- Time-series forecasting

- Real-time anomaly detection in transactional data

- On-device or edge sequential inference

- IoT sensor data processing
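
The "memory" described above comes from feeding the previous hidden state back into every step. A minimal scalar sketch, assuming hypothetical weights `w_x` and `w_h` and a `tanh` activation as in a vanilla RNN (LSTMs and GRUs add gates on top of this recurrence):

```python
import math


def rnn_forward(inputs, w_x, w_h, bias=0.0, h0=0.0):
    """Run a one-unit vanilla RNN over a sequence and return every hidden state."""
    h = h0
    states = []
    for x in inputs:
        # New state mixes the current input with the previous state.
        h = math.tanh(w_x * x + w_h * h + bias)
        states.append(h)
    return states


if __name__ == "__main__":
    spike_then_silence = [1.0, 0.0, 0.0]
    print(rnn_forward(spike_then_silence, w_x=1.0, w_h=1.0))  # the spike echoes forward
    print(rnn_forward(spike_then_silence, w_x=1.0, w_h=0.0))  # no recurrence, no memory
```

With the recurrent weight set to zero the state forgets the spike immediately; with it switched on, the earlier input still shapes later outputs, which is the property time-series and sensor workloads depend on.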

Transformer-Based Language Models

This is the leading DL architecture for understanding and generating language. Our deep learning engineers work with BERT and RoBERTa for classification and extraction tasks, GPT-family models for generation, and T5 and BART for summarization and translation.

Use case: Any problem where the core input is text. For example, extracting meaning from documents, generating responses, classifying intent, or building search systems that understand language rather than just match keywords.

When we'd recommend it:

- Clinical NLP and medical document understanding

- Fraud narrative analysis in fintech

- Semantic search and document retrieval

- Domain-adapted text classification and summarization
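
At the heart of every model named above is scaled dot-product attention: each token's query is compared with every key, and the resulting weights blend the values. A minimal single-head sketch in plain Python, with no learned projections or masking; the function names and toy vectors are illustrative assumptions.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Scaled dot-product attention: softmax(Q K^T / sqrt(d)) V."""
    d = len(keys[0])
    outputs = []
    for q in queries:
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in keys]
        weights = softmax(scores)
        outputs.append(
            [sum(w * v[c] for w, v in zip(weights, values)) for c in range(len(values[0]))]
        )
    return outputs


if __name__ == "__main__":
    keys = [[10.0, 0.0], [-10.0, 0.0]]
    values = [[1.0], [0.0]]
    # The query points the same way as the first key, so its value dominates.
    print(attention([[10.0, 0.0]], keys, values))
```

Because every token can attend to every other token in one step, these models capture long-range context that keyword matching misses.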

Generative Adversarial Networks (GANs)

A generative DL architecture where two networks, a generator and a discriminator, train against each other to produce realistic synthetic outputs. Our deep learning developers work with StyleGAN, conditional GANs, and CycleGAN, depending on the generation task.

Use case: Situations where real data is scarce, imbalanced, or difficult to collect, and high-quality synthetic data can stand in for it during training or augmentation.

When we'd recommend it:

- Synthetic data generation for underrepresented classes

- Medical image augmentation

- Cross-domain image translation

- Anomaly detection via discriminator scoring
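
The adversarial setup described above comes down to two opposing loss functions. The sketch below computes them in plain Python from discriminator outputs (probabilities that a sample is real), using the common non-saturating generator loss; the networks themselves are not shown, and the function names and sample probabilities are hypothetical.

```python
import math

EPS = 1e-12  # keeps log() defined if a probability hits exactly 0


def _mean_log(probabilities):
    return sum(math.log(min(1.0, max(EPS, p))) for p in probabilities) / len(probabilities)


def discriminator_loss(d_real, d_fake):
    """Low when real samples score near 1 and generated samples score near 0."""
    return -(_mean_log(d_real) + _mean_log([1.0 - p for p in d_fake]))


def generator_loss(d_fake):
    """Non-saturating loss: low when the discriminator scores generated samples as real."""
    return -_mean_log(d_fake)


if __name__ == "__main__":
    # Early training: the discriminator easily spots generated samples.
    print(discriminator_loss(d_real=[0.9, 0.8], d_fake=[0.1, 0.2]), generator_loss([0.1, 0.2]))
    # Later: the discriminator is reduced to guessing.
    print(discriminator_loss(d_real=[0.5, 0.5], d_fake=[0.5, 0.5]), generator_loss([0.5, 0.5]))
```

Training alternates between lowering each loss, and the same discriminator scores are what the anomaly-detection use case reuses at inference time.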

Diffusion Models

A deep learning architecture that learns to reconstruct clean outputs from noise, producing high-quality, diverse samples that have outperformed GANs on several image-synthesis benchmarks. Our deep learning experts work with DDPM, Stable Diffusion, and fine-tuned task-specific variants.

Use case: High-fidelity generation tasks where quality and variety both matter, and where other generative DL models have struggled with consistency.

When we'd recommend it:

- High-fidelity image synthesis

- Rare condition dataset augmentation in healthcare

- Document image generation

- Generative pipelines requiring output diversity at scale
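
The noising side of this process has a simple closed form in DDPM: a clean sample is blended with Gaussian noise according to a cumulative schedule. The sketch below assumes a linear beta schedule with the start and end values commonly cited from the DDPM paper; the function names are illustrative, and the learned denoising network that reverses the process is not shown.

```python
import math
import random


def alpha_bars(num_steps, beta_start=1e-4, beta_end=0.02):
    """Cumulative signal-retention factors for a linear beta schedule (num_steps >= 2)."""
    betas = [
        beta_start + (beta_end - beta_start) * t / (num_steps - 1)
        for t in range(num_steps)
    ]
    bars, product = [], 1.0
    for beta in betas:
        product *= 1.0 - beta
        bars.append(product)
    return bars


def add_noise(x0, t, bars, rng=random):
    """Sample x_t = sqrt(abar_t) * x0 + sqrt(1 - abar_t) * noise."""
    keep = math.sqrt(bars[t])
    spread = math.sqrt(1.0 - bars[t])
    return [keep * x + spread * rng.gauss(0.0, 1.0) for x in x0]


if __name__ == "__main__":
    bars = alpha_bars(1000)
    clean = [1.0, -1.0, 0.5]
    print(add_noise(clean, 0, bars))    # almost unchanged
    print(add_noise(clean, 999, bars))  # almost pure noise
```

A diffusion model is trained to undo this corruption one step at a time, which is why generation starts from random noise and ends at a clean sample.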

Autoencoders & Variational Autoencoders (VAEs)

Deep learning architectures that learn a compressed representation of data by training to reconstruct their own inputs. VAEs add a probabilistic structure to that representation, enabling controlled generation.

Use case: Problems where understanding what's normal is more useful than labeling what's abnormal, making them a natural fit for unsupervised anomaly detection and data compression tasks.

When we'd recommend it:

- Anomaly detection in financial or operational data

- Dimensionality reduction on complex datasets

- Unsupervised representation learning

- Generative tasks requiring an interpretable latent space
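
Anomaly detection with an autoencoder usually means scoring each input by how badly the model reconstructs it. A minimal sketch of that scoring logic in plain Python follows; `toy_reconstruct` is a hypothetical stand-in for a trained autoencoder (it can only reproduce values between 0 and 1), and the 0.99 quantile threshold is an assumed setting.

```python
def reconstruction_error(x, reconstruct):
    """Mean squared error between an input and its reconstruction."""
    x_hat = reconstruct(x)
    return sum((a - b) ** 2 for a, b in zip(x, x_hat)) / len(x)


def fit_threshold(normal_samples, reconstruct, quantile=0.99):
    """Pick an error threshold from data known to be normal."""
    errors = sorted(reconstruction_error(x, reconstruct) for x in normal_samples)
    index = min(len(errors) - 1, int(quantile * len(errors)))
    return errors[index]


def is_anomaly(x, reconstruct, threshold):
    return reconstruction_error(x, reconstruct) > threshold


def toy_reconstruct(x):
    """Hypothetical 'trained' model: it has only learned the range [0, 1]."""
    return [min(1.0, max(0.0, v)) for v in x]


if __name__ == "__main__":
    normal = [[0.1, 0.2], [0.5, 0.9], [0.3, 0.3]]
    threshold = fit_threshold(normal, toy_reconstruct)
    print(is_anomaly([0.3, 0.4], toy_reconstruct, threshold))  # looks like training data
    print(is_anomaly([3.0, 0.5], toy_reconstruct, threshold))  # outside what was learned
```

No labeled anomalies are needed: the model only has to learn what normal looks like, and anything it cannot reconstruct well is flagged for review.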

Tech Stack

- Core Frameworks: PyTorch (Lightning), TensorFlow (TFX), JAX, Keras

- Generative & Agentic AI: LangGraph, LlamaIndex, Hugging Face (Transformers), AutoGPT

- Hardware Acceleration: NVIDIA CUDA, cuDNN, Intel MKL, Tensor Cores

- Distributed Training: DeepSpeed, Horovod, PyTorch DDP, Ray Train

- Inference & Edge Ops: NVIDIA TensorRT, Intel OpenVINO, ONNX Runtime, Apache TVM

- DeepOps & Lifecycle: MLflow, Kubeflow, Weights & Biases, DVC (Data Version Control)

- Data Pipelines: Apache Spark, DALI (NVIDIA Data Loading Library), Apache Kafka

Frequently Asked Questions

Hire Deep Learning Engineers: FAQs

When you work with our deep learning development company, you retain 100% ownership of the final model weights, architecture, and any proprietary data used during training.

Deep Learning typically thrives on massive datasets. However, if you have only a small dataset, our deep learning developers can use Transfer Learning and Synthetic Data Generation to make DL viable.

To ensure regulatory compliance and enterprise trust, our deep learning development company integrates Explainable AI (XAI) techniques such as SHAP and LIME. These tools deconstruct complex decisions into human-readable insights, allowing your stakeholders to understand the "why" behind every model output.

A standard engagement follows a 3-stage lifecycle: Architecture & Strategy (a few weeks), Model Development & Training (12-14 weeks), and Optimization & Deployment (a few weeks). While timelines vary based on data complexity, we guarantee timely delivery. Contact us at info@suntecindia.com for a timeline estimate for your requirement.

While standard ML is highly effective for structured data (such as spreadsheets and databases), Deep Learning is engineered to interpret unstructured data, such as video, audio, and natural language. You can talk to our deep learning engineers to see which fits your use case.

Our deep learning development company offers both dedicated-team and project-based engagement models to suit your project's lifecycle. You can hire a Dedicated Development Team that functions as a remote extension of your in-house staff (ideal for long-term, evolving R&D) or opt for a Project-Based Engagement with defined deliverables and milestones.