Modernized a legacy analytics platform and reduced its physical footprint through cloud migration.

Hire Data Migration Engineers

Comprehensive Data Migration Services

Explore the data migration services our experts provide. From planning to post-migration support, we help businesses move their data smoothly and safely.

Data Migration Strategy and Planning

Engineer a low-downtime migration roadmap with our data migration consultants. We leverage the 7Rs of Cloud Migration framework to align technical execution with business continuity objectives. Our experts mitigate the risk of operational stasis by implementing Change Data Capture (CDC) protocols, establishing a real-time bidirectional synchronization between legacy source systems and modernized target architectures. By rigorously defining Recovery Time Objectives (RTO) and Recovery Point Objectives (RPO), we also build idempotent operations and automated rollback triggers into the migration logic.
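To make the idea of idempotent CDC replay concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not our production tooling: the `ChangeEvent` shape, the `TargetTable` class, and the use of a log sequence number (LSN) as a watermark are all hypothetical, and the sketch covers one-way replication only.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ChangeEvent:
    """One captured change from the source system (hypothetical shape)."""
    lsn: int                    # monotonically increasing log sequence number
    op: str                     # "upsert" or "delete"
    key: str                    # primary key of the affected row
    row: Optional[dict] = None  # new row contents for an upsert


class TargetTable:
    """In-memory stand-in for a target table plus its replication watermark."""

    def __init__(self) -> None:
        self.rows: dict[str, dict] = {}
        self.last_applied_lsn = 0

    def apply(self, event: ChangeEvent) -> bool:
        """Apply an event once; replays at or below the watermark are skipped."""
        if event.lsn <= self.last_applied_lsn:
            return False
        if event.op == "upsert":
            self.rows[event.key] = dict(event.row or {})
        elif event.op == "delete":
            self.rows.pop(event.key, None)
        else:
            raise ValueError(f"unknown operation: {event.op}")
        self.last_applied_lsn = event.lsn
        return True


def replicate(events: list[ChangeEvent], target: TargetTable) -> int:
    """Apply events in LSN order and return how many were newly applied."""
    return sum(target.apply(e) for e in sorted(events, key=lambda e: e.lsn))
```

Because each event is applied at most once, the same stream can be replayed after a failure without corrupting the target, which is the property a rollback-and-retry strategy relies on.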

Data Mapping and Transformation

Standardize your disparate data assets with Source-to-Target Mapping (STM) documentation, which serves as the definitive reference for migration logic. Our experts ensure compatibility with target environments, including Snowflake, Google BigQuery, and Amazon Redshift. We optimize data distribution keys, sort keys, and partition strategies in the transformation layer for improved query performance and faster retrieval. By implementing rigorous data enrichment techniques and harmonization logic, we standardize and organize fragmented data for maximum consistency.
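A source-to-target mapping can be expressed as data rather than scattered code. The sketch below is one possible shape, assuming hypothetical legacy column names (`CUST_NO`, `CUST_NM`, `CRT_DT`) and a day/month/year source date format; a real STM document would define these per system.

```python
from datetime import datetime
from typing import Any, Callable

# Hypothetical mapping: target column -> (source column, transform function).
STM: dict[str, tuple[str, Callable[[Any], Any]]] = {
    "customer_id": ("CUST_NO", int),
    "full_name": ("CUST_NM", lambda v: " ".join(str(v).split()).title()),
    "signup_date": (
        "CRT_DT",
        lambda v: datetime.strptime(v, "%d/%m/%Y").date().isoformat(),
    ),
}


def transform_row(source_row: dict[str, Any]) -> dict[str, Any]:
    """Build a target row by applying each mapping rule to its source column."""
    missing = [src for src, _ in STM.values() if src not in source_row]
    if missing:
        raise KeyError(f"source row is missing mapped columns: {missing}")
    return {target: fn(source_row[src]) for target, (src, fn) in STM.items()}
```

Keeping the mapping in a single table like this makes it easy to review against the STM documentation and to fail fast when a source column is absent.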

ETL Pipeline Development

Architect fault-tolerant ETL and ELT pipelines for automating data flow across hybrid or multi-cloud ecosystems. Hire data migration experts to design modular data flows that incorporate strict idempotency and stateful checkpointing to ensure resilience against network latency or ingestion-layer failures. By implementing strategic partitioning, bucketing, and clustering at the target destination, we ensure that your data is successfully migrated and physically organized to maximize query pushdown performance and minimize compute overhead.
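As an illustration of idempotency combined with stateful checkpointing, the following sketch resumes a batch load from the last saved offset. It is a simplified model, assuming an in-memory `target` dictionary, a JSON checkpoint file, and a `fail_after_batches` parameter that exists only to simulate an ingestion failure.

```python
import json
from pathlib import Path
from typing import Optional


def read_checkpoint(path: Path) -> int:
    """Return the index of the next unprocessed row (0 if no checkpoint exists)."""
    if path.exists():
        return json.loads(path.read_text())["next_offset"]
    return 0


def load_in_batches(rows: list, target: dict, checkpoint: Path,
                    batch_size: int = 2,
                    fail_after_batches: Optional[int] = None) -> None:
    """Upsert rows into `target` in batches, saving progress after each batch.

    `fail_after_batches` simulates an ingestion failure for demonstration only.
    """
    offset = read_checkpoint(checkpoint)
    done = 0
    while offset < len(rows):
        if fail_after_batches is not None and done == fail_after_batches:
            raise ConnectionError("simulated network failure")
        batch = rows[offset:offset + batch_size]
        for row in batch:
            # Keyed upsert: replaying the same batch leaves the target unchanged.
            target[row["id"]] = row
        offset += len(batch)
        checkpoint.write_text(json.dumps({"next_offset": offset}))
        done += 1
```

Calling `load_in_batches` again after a failure picks up at the saved offset, and because writes are keyed upserts, a batch that was written but not yet checkpointed can be replayed safely.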

Data Cleansing and Quality Checks

Hire data engineers to fortify your target environment against downstream analytical drift. We implement automated data-cleansing heuristics to prevent "garbage-in, garbage-out" outcomes. Our experts use data quality frameworks such as Great Expectations or Deequ to establish programmatic data contracts that serve as automated circuit breakers within the migration pipeline. This proactive approach to anomaly detection and outlier remediation guarantees that your modernized data assets maintain the highest level of semantic accuracy.
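The circuit-breaker idea can be shown without any framework. The sketch below uses plain Python rather than the Great Expectations or Deequ APIs, and the `CONTRACT` rules, column names, and `max_failure_rate` threshold are illustrative assumptions.

```python
from typing import Any, Callable

# Hypothetical contract: column name -> predicate every value must satisfy.
CONTRACT: dict[str, Callable[[Any], bool]] = {
    "order_id": lambda v: isinstance(v, int) and v > 0,
    "amount": lambda v: isinstance(v, (int, float)) and 0 <= v < 1_000_000,
    "currency": lambda v: v in {"USD", "EUR", "GBP"},
}


class ContractViolation(Exception):
    """Raised to halt the pipeline when a batch breaks the contract."""


def enforce_contract(batch: list[dict], max_failure_rate: float = 0.0) -> list[dict]:
    """Return the batch unchanged if it passes; raise ContractViolation otherwise."""
    failures = [
        (i, col)
        for i, row in enumerate(batch)
        for col, check in CONTRACT.items()
        if col not in row or not check(row[col])
    ]
    bad_rows = {i for i, _ in failures}
    if batch and len(bad_rows) / len(batch) > max_failure_rate:
        raise ContractViolation(
            f"{len(bad_rows)} of {len(batch)} rows failed: {failures[:5]}"
        )
    return batch
```

Placing a check like this between extract and load means a bad batch stops the pipeline before it reaches the target, rather than being discovered later in reports.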

Secure Data Migration Execution

Operationalize a hardened data transit layer by embedding enterprise-grade security protocols directly into the migration runtime. We apply a zero-trust architecture and secure data with AES-256 encryption at rest and TLS 1.3 in transit. For organizations operating within regulated frameworks such as GDPR, HIPAA, CCPA, and SOC 2, we implement data masking, pseudonymization, and tokenization of Personally Identifiable Information (PII) to ensure compliance without compromising analytical utility.
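Pseudonymization can preserve analytical utility because the same input always maps to the same token, so joins and counts still work. Here is a minimal sketch using keyed hashing (HMAC-SHA256) from the Python standard library; the `PII_FIELDS` set is hypothetical, and in practice the key would come from a secrets manager rather than source code.

```python
import hashlib
import hmac

PII_FIELDS = {"email", "ssn"}  # hypothetical list of sensitive columns


def pseudonymize(value: str, secret_key: bytes) -> str:
    """Return a stable keyed token for a PII value (HMAC-SHA256, hex-encoded)."""
    return hmac.new(secret_key, value.encode("utf-8"), hashlib.sha256).hexdigest()


def mask_row(row: dict, secret_key: bytes) -> dict:
    """Copy a row, replacing PII fields with tokens and leaving other fields intact."""
    return {
        k: pseudonymize(str(v), secret_key) if k in PII_FIELDS else v
        for k, v in row.items()
    }
```

Keyed hashing is only one option. Format-preserving tokenization or vault-backed token stores may be a better fit when the original value must be recoverable.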

Migration Testing and QA Validation

Hire data migration experts to validate the structural and functional integrity of your migrated data. Our engineers execute rigorous Schema Validation and Row Count Reconciliation protocols, with Bit-for-Bit parity checks to ensure 100% fidelity between source and target datasets. We also implement comprehensive User Acceptance Testing (UAT) and Performance Benchmarking to confirm that the new infrastructure meets or exceeds legacy latency thresholds and concurrency requirements.
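Row count reconciliation and content parity checks can be sketched as follows. This example uses SQLite connections as stand-ins for source and target, and hashes the `repr` of each row, which is an assumption that only holds when both sides return identical Python types; cross-engine comparisons would need explicit type normalization first.

```python
import hashlib
import sqlite3


def table_fingerprint(conn: sqlite3.Connection, table: str, key: str) -> tuple[int, str]:
    """Return (row count, SHA-256 over all rows ordered by the key column)."""
    # Identifiers are interpolated into SQL, so pass trusted names only.
    digest = hashlib.sha256()
    count = 0
    for row in conn.execute(f'SELECT * FROM "{table}" ORDER BY "{key}"'):
        digest.update(repr(row).encode("utf-8"))
        count += 1
    return count, digest.hexdigest()


def reconcile(source: sqlite3.Connection, target: sqlite3.Connection,
              table: str, key: str) -> dict:
    """Compare row counts and content checksums between source and target."""
    src_count, src_hash = table_fingerprint(source, table, key)
    tgt_count, tgt_hash = table_fingerprint(target, table, key)
    return {
        "row_count_match": src_count == tgt_count,
        "checksum_match": src_hash == tgt_hash,
        "source_rows": src_count,
        "target_rows": tgt_count,
    }
```

Matching counts alone can hide silently altered values, which is why the checksum comparison is reported separately.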

Post-Migration Support

Stabilize your newly migrated data environment with dedicated post-migration support. Our engineers continuously monitor migrated datasets, ingestion pipelines, and downstream workloads to detect anomalies. We conduct rigorous record reconciliation, referential integrity verification, and pipeline health diagnostics to ensure that all migrated assets function exactly as intended. Through proactive monitoring and log analysis, we quickly resolve performance irregularities, query failures, or pipeline disruptions.

Managed Talent. Engineered for Accountability.

Dedicated Full-Time Engineers

FTEs only. No freelancers or gig marketplaces.

Experienced Talent

Vetted Experts. Rapid Deployment.

Managed Operations

Senior Oversight. Time & Task Monitoring.

Workflow-Ready Integration

Jira, Slack, GitHub, Teams

Global Overlap

All Time Zones. 24/7 Support.

Security

ISO 27001 & CMMI3. NDA & IP Secure.

Send an Inquiry

Schedule a Discovery Call for Cloud Data Migration

Consult with senior data migration engineers who specialize in cross-platform cloud data migration services.

Book a Call

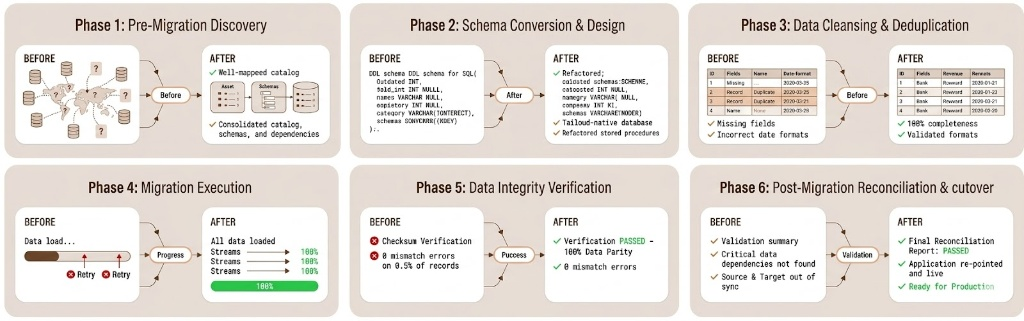

Structured Data Migration Framework for Data Integrity

Explore our systematic approach to complex data migration. Our engineering experts combine platform-native logic and rigorous validation protocols in a structured workflow designed to ensure data parity and performance.

Cloud Platform-Native Data Migration Services

Achieve seamless cloud data migration with architectures designed for performance. Our data migration specialists deploy platform-specific logic to reduce technical debt and ensure long-term operational resilience.

AWS Cloud Data Migration

Hire AWS cloud data migration engineers to build scalable S3 lakehouses and Redshift clusters using AWS Glue, DMS, and SCT. By implementing Terraform-based infrastructure-as-code and automated schema conversions, we eliminate manual configuration errors. With our expert AWS data migration service, we deliver low-latency analytics and cost-optimized storage required to fuel enterprise-scale innovation.

Azure Cloud Data Migration

Hire Azure migration engineers to consolidate fragmented silos into Synapse Analytics or ADLS Gen2 environments. Our Azure specialists utilize Azure Data Factory and Databricks to build idempotent ETL/ELT pipelines with managed identities. Leverage our Azure data migration service for a secure single source of truth that slashes reporting cycles and ensures global regulatory compliance.

GCP Cloud Data Migration

Leverage our Google data migration service to architect ML-ready environments using BigQuery and Dataflow. We use the Google Database Migration Service for serverless, side-by-side migrations that minimize impact on the source. Hire Google Cloud Platform engineers to convert stagnant databases into high-velocity assets, optimize your compute spend, and structure data for advanced predictive AI initiatives.

Multi-Cloud Data Migration

Hire multi-cloud data engineers for expert cloud data migration services. We maintain strict data parity across providers using Kubernetes-native architectures and Snowflake. By leveraging Apache Airflow for DAG orchestration, we prevent vendor lock-in and ensure your infrastructure remains agile. This enables you to dynamically shift workloads based on cost and performance requirements.

Tech Stack

Languages and core frameworks used by our data migration specialists.

- Cloud Migration Platforms: AWS Database Migration Service (DMS), Azure Database Migration Service, Google Database Migration Service, AWS Snowball

- ETL / ELT Frameworks: Apache Airflow, Talend, Informatica, Azure Data Factory, AWS Glue, Matillion

- Databases (Source & Target): Oracle, Microsoft SQL Server, MySQL, PostgreSQL, IBM DB2

- Cloud Data Warehouses: Amazon Redshift, Google BigQuery, Snowflake, Azure Synapse Analytics

- Data Processing Frameworks: Apache Spark, Databricks, Hadoop, Apache Beam

- Data Integration & Replication Tools: Fivetran, Stitch, Qlik Replicate, Hevo Data

- Scripting & Programming Languages: Python, SQL, Shell Scripting, Java

- Data Validation & Quality Tools: Great Expectations, Apache Griffin

- Data Orchestration & Workflow Management: Apache Airflow, Prefect, Luigi

- Security & Compliance Tools: AWS IAM, Azure Active Directory, HashiCorp Vault

- Containerization & DevOps Tools: Docker, Kubernetes, Jenkins, GitHub Actions

- Monitoring & Observability: Prometheus, Grafana, ELK Stack

Frequently Asked Questions

Hire Data Engineers: FAQs

Yes, our engineers have deep architectural expertise across all major cloud-based data migration services. Our AWS data migration service helps you migrate relational databases, the Azure data migration service helps with seamless SQL transitions, and the Google data migration service supports high-performance GCP integrations. We ensure your workloads are fully optimized for their respective cloud-native environments.

Our data migration company uses Change Data Capture (CDC) to maintain real-time synchronization between legacy and modern systems. By establishing a continuous data stream, we ensure the target environment is fully consistent with the source before the final cutover. This "trickle" methodology allows for a seamless migration, reducing the maintenance window to minutes and ensuring uninterrupted business continuity.

Our data migration experts implement security through AES-256 at rest and TLS 1.3 in transit. For highly regulated sectors, we also implement automated data masking and pseudonymization to protect PII. By leveraging secure VPC tunneling and granular IAM-governed access controls, we ensure your migration remains fully compliant with GDPR, CCPA, HIPAA, and SOC2 standards.

Our data migration company provides a dedicated data migration support phase immediately following the production cutover. Our team monitors real-time telemetry and query execution plans to identify latent anomalies. If a critical failure occurs, we trigger pre-defined, idempotent rollback protocols to restore the legacy state instantly, preventing data loss while we perform a root-cause analysis and remediation.

Absolutely. Our data engineers can optimize your architecture for downstream consumption. This includes refining indexing, implementing data lifecycle policies, and structuring schemas for high-dimensional AI/ML modeling. We ensure your data is partitioned and clustered correctly, providing a high-performance foundation for advanced analytics and AI initiatives.

Yes. Our engagement models are built for flexibility. Whether you need to accelerate a timeline by adding specialized ETL developers or scale down once the primary architectural phase is complete, we provide on-demand access to pre-vetted talent.